From Big Data to Smart Data

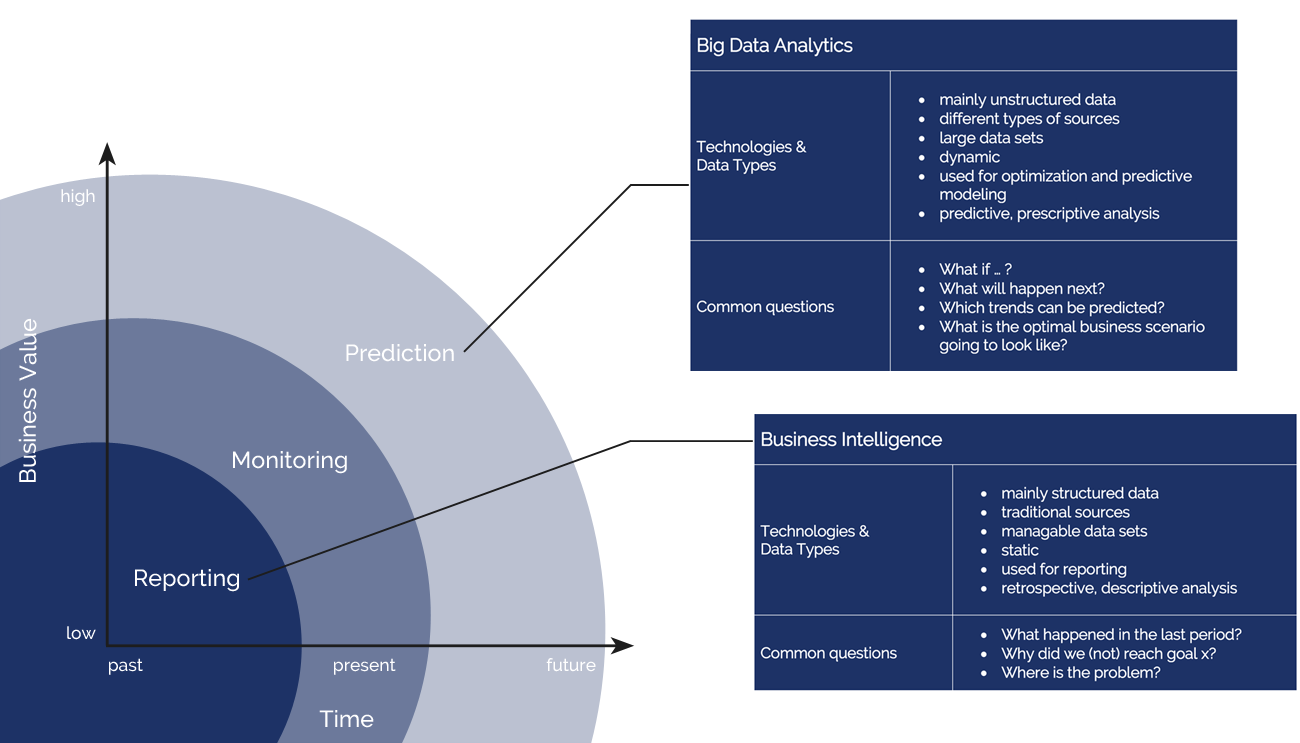

In many companies, large amounts of data are generated every day across heterogeneous systems. These systems use different formats, and the data is often stored in unstructured form. This makes it difficult to find the right information and to use it for formulating strategies and making effective decisions.

We offer flexible, easy-to-use solutions that provide insight for operational and strategic company management and help organize business processes efficiently. Our expertise lies in the analysis, design and implementation of big data applications.

Unlike natural raw materials such as oil, coal or natural gas, data, the raw material of the 21st century, keeps growing. This growth is expected to accelerate exponentially as IoT solutions are introduced. The goal is therefore to prepare Big Data so that it can be refined into Smart Data and its full economic potential can be realized.

KPI Monitoring and Reporting with the ELK Stack

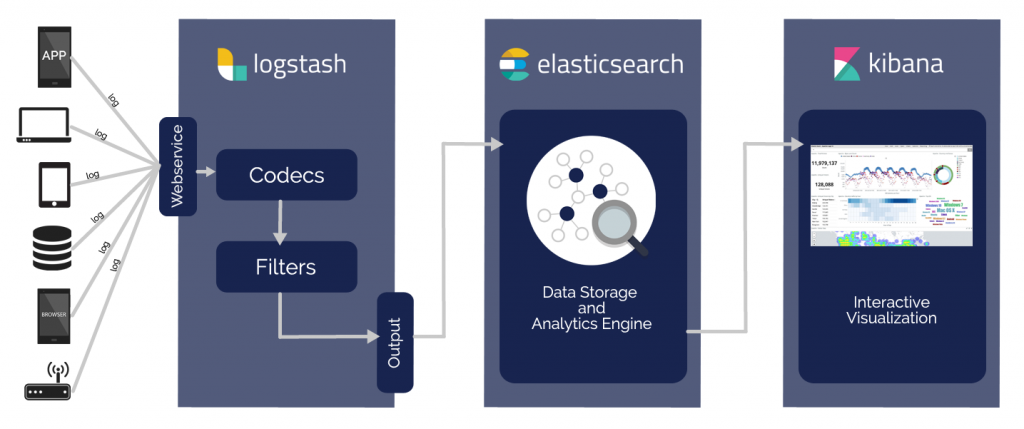

Our solutions are characterized by high security and performance. We build them on the ELK stack from Elastic (www.elastic.co). Data from the applications under examination is logged via our web service and processed with Logstash, an open source tool for processing data streams. A key feature is that data from different sources can be processed simultaneously. The data is parsed and filtered, then stored in Elasticsearch, the data storage and analytics engine. Because Elasticsearch is fast and flexible, search queries can be run in near real time, so we can act quickly on the insights gained. The results are then visualized interactively in Kibana, which makes the events and structures in the data set easy to understand.

The ELK stack can also be combined with the big data framework Apache Hadoop®. Hadoop is used to process very large amounts of data and is designed to be fault tolerant. Thanks to this flexibility, our solutions can be adapted to your needs.
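
The flow of events from several sources through parsing, filtering and analysis can be illustrated in plain Python. This is a minimal sketch and not Logstash, Elasticsearch or Kibana code. The two sources, their formats, the field names (`app`, `ts`, `status`) and the error-rate KPI are hypothetical assumptions. They show how events in different formats are normalized to a common schema and then aggregated into a KPI of the kind a dashboard might display.

```python
import json
from collections import Counter
from datetime import datetime, timezone

# Hypothetical raw events from two sources with different formats:
# source A emits JSON lines, source B emits semicolon-separated text.
SOURCE_A = [
    '{"app": "shop", "ts": "2024-05-01T10:00:00Z", "status": 200}',
    '{"app": "shop", "ts": "2024-05-01T10:00:05Z", "status": 500}',
]
SOURCE_B = [
    "billing;2024-05-01T10:00:02Z;200",
    "billing;2024-05-01T10:00:07Z;503",
    "billing;2024-05-01T10:00:09Z;200",
]


def parse_json(line):
    """Input stage for source A: decode one JSON line into a dict."""
    return json.loads(line)


def parse_csv(line):
    """Input stage for source B: split the semicolon-separated fields."""
    app, ts, status = line.split(";")
    return {"app": app, "ts": ts, "status": int(status)}


def normalize(event):
    """Filter stage: map an event to a common schema with a parsed UTC timestamp."""
    ts = datetime.fromisoformat(event["ts"].replace("Z", "+00:00"))
    status = int(event["status"])
    return {
        "app": event["app"],
        "timestamp": ts.astimezone(timezone.utc),
        "status": status,
        "is_error": status >= 500,
    }


def pipeline():
    """Merge both sources into one normalized stream, ordered by time."""
    raw = [parse_json(line) for line in SOURCE_A] + [parse_csv(line) for line in SOURCE_B]
    return sorted((normalize(e) for e in raw), key=lambda e: e["timestamp"])


def error_rate_by_app(events):
    """Analysis stage: a simple KPI, the share of 5xx responses per app."""
    totals, errors = Counter(), Counter()
    for e in events:
        totals[e["app"]] += 1
        errors[e["app"]] += e["is_error"]  # True counts as 1
    return {app: errors[app] / totals[app] for app in totals}


events = pipeline()
print({app: round(rate, 2) for app, rate in error_rate_by_app(events).items()})
# {'shop': 0.5, 'billing': 0.33}
```

In a real deployment, Logstash inputs and filters would handle the parsing and normalization. Elasticsearch aggregations and Kibana visualizations would then compute and display such KPIs.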

Natural Language Processing (NLP)

If the aggregated data contains text, for example, additional information can be extracted with Natural Language Processing (NLP).

Here, computers analyze human language. NLP algorithms often combine methods from linguistics, computer science and artificial intelligence, and machine learning in particular plays a large role in modern NLP. From a cognitive perspective, learning describes the processing of information that is stored and retrieved as required. Unlike classical algorithms built on fixed, hand-written rules, machine learning can be a continuous process. A model is trained on more and more data, which in turn automatically improves its results.
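The idea that an algorithm improves as it receives more data can be sketched with an online perceptron. This classic learning rule updates its weights one example at a time and changes only when it makes a mistake. It is an illustrative choice, not a description of the methods used in our solutions. The toy data points and labels are hypothetical.

```python
def predict(weights, bias, x):
    """Return +1 or -1 depending on which side of the decision boundary x lies."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation >= 0 else -1


def update(weights, bias, x, y, lr=1.0):
    """Perceptron rule: adjust the model only when it misclassifies an example."""
    if predict(weights, bias, x) != y:
        weights = [w + lr * y * xi for w, xi in zip(weights, x)]
        return weights, bias + lr * y, True
    return weights, bias, False


def train_online(stream, passes=3):
    """Feed examples one by one and record how many mistakes each pass makes."""
    weights, bias = [0.0, 0.0], 0.0
    mistakes_per_pass = []
    for _ in range(passes):
        mistakes = 0
        for x, y in stream:
            weights, bias, wrong = update(weights, bias, x, y)
            mistakes += wrong
        mistakes_per_pass.append(mistakes)
    return weights, bias, mistakes_per_pass


# Hypothetical toy data: label +1 for points in the upper right, -1 otherwise.
STREAM = [
    ((2, 2), 1), ((0, 0), -1), ((3, 1), 1),
    ((-1, 0), -1), ((2, 3), 1), ((0, -1), -1),
]

weights, bias, mistakes = train_online(STREAM)
print(mistakes)  # [2, 1, 0]
```

The falling mistake count shows, in miniature, the "automated optimization" described above. Each misclassified example nudges the model until it separates the data correctly.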

Typical tasks solved with NLP include recognizing morphological and syntactic relationships. Building on these, further analyses become possible, such as sentiment analysis, part-of-speech tagging and named entity recognition. These analyses rely on techniques such as tokenization, lemmatization, word embeddings (Word2Vec) and neural networks. By combining open source implementations of these techniques flexibly, we can develop applications for a variety of areas, tailored to your needs.
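
The first steps of such an analysis can be sketched in plain Python: tokenization, lemmatization and a simple lexicon-based sentiment score. This is a deliberately simplified stand-in. The lemma table and the sentiment lexicon are hypothetical toy examples. The sketch does not cover part-of-speech tagging, named entity recognition, Word2Vec or neural networks, which in practice come from trained models in open source NLP libraries.

```python
import re

# Hypothetical, tiny lookup tables; real systems use full lexical
# resources or trained models instead.
LEMMAS = {"was": "be", "is": "be", "loved": "love", "loves": "love",
          "delays": "delay", "hated": "hate"}
SENTIMENT = {"love": 1, "great": 1, "hate": -1, "delay": -1}


def tokenize(text):
    """Split text into lowercase word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def lemmatize(tokens):
    """Map each token to its base form via the lookup table, if it is known."""
    return [LEMMAS.get(t, t) for t in tokens]


def sentiment_score(text):
    """Sum the polarity of all lemmas: > 0 positive, < 0 negative, 0 neutral."""
    return sum(SENTIMENT.get(lemma, 0) for lemma in lemmatize(tokenize(text)))


print(sentiment_score("I loved the new app!"))  # 1
print(sentiment_score("The service was great, but I hated the delays."))  # -1
```

Lemmatization lets "hated" and "delays" match the lexicon entries "hate" and "delay". Without it, both negative signals in the second sentence would be missed.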